Where is DeepSeek-v4? Inside the Delay of the AI World’s Most Anticipated Disruptor

As frustration mounts over a missed Lunar New Year launch, technical leaks and geopolitical shifts suggest a bigger game is being played.

In the fast-moving world of artificial intelligence, a two-week delay can feel like a lifetime. For developers waiting on DeepSeek-v4, the silence since the Lunar New Year has turned excitement into what many are calling "hype-exhaustion." While the lab’s previous models shook the industry with their efficiency, the wait for its latest flagship is now tangled in a web of technical testing and global trade tensions.

The Specs and the Economic Stakes

The anticipation isn't just about another chatbot; it is about a shift in the economics of intelligence. Rumors suggest DeepSeek-v4 will be a 1-trillion-parameter Mixture-of-Experts (MoE) model priced at just $0.27 per million tokens. If accurate, that would make it roughly 20 to 40 times cheaper than Western counterparts like Claude Opus, threatening to turn high-level reasoning into a commodity.
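The arithmetic behind the "20 to 40 times cheaper" claim can be sketched in a few lines of Python. The $0.27 price and the multiplier range are the rumored figures cited above; the comparator prices are merely what those two numbers imply, not values from any published price list, and the 50-million-token workload is a hypothetical chosen for illustration.

```python
# Rumored DeepSeek-v4 price in USD per million tokens (figure cited in the article).
RUMORED_PRICE_PER_M = 0.27

# The "20 to 40 times cheaper" range cited in the article.
MULTIPLIERS = (20, 40)


def implied_comparator_prices(price_per_m, multipliers):
    """Per-million-token prices a rival would have to charge for the claim to hold."""
    return [round(price_per_m * m, 2) for m in multipliers]


def workload_cost(tokens, price_per_m):
    """Cost in USD of processing `tokens` tokens at a given per-million price."""
    return round(tokens / 1_000_000 * price_per_m, 2)


if __name__ == "__main__":
    low, high = implied_comparator_prices(RUMORED_PRICE_PER_M, MULTIPLIERS)
    print(f"Implied comparator price: ${low} to ${high} per million tokens")

    # Hypothetical workload: 50 million tokens (an assumption for illustration).
    tokens = 50_000_000
    print(f"Rumored v4 cost:  ${workload_cost(tokens, RUMORED_PRICE_PER_M)}")
    print(f"Comparator cost:  ${workload_cost(tokens, low)} to ${workload_cost(tokens, high)}")
```

In other words, the rumor only holds together if the comparison models cost somewhere between about $5 and $11 per million tokens.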

Beyond the price, the technical targets are aggressive. Internal leaks point to a 90% score on HumanEval and a massive 1-million-token context window. This would allow the model to ingest entire codebases at once, effectively moving from a simple chat interface to an integrated "AI engineer" capable of understanding complex repository structures.
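For a rough sense of what "entire codebases" means at this scale, the sketch below estimates whether a local repository would fit in a 1-million-token window. It assumes about four characters per token, a common rule of thumb rather than a property of DeepSeek's tokenizer, and the function names and file-extension list are hypothetical choices made for this example.

```python
import os

CONTEXT_WINDOW_TOKENS = 1_000_000  # rumored context size cited in the article
CHARS_PER_TOKEN = 4                # ASSUMPTION: rough heuristic, not DeepSeek's tokenizer

# ASSUMPTION: an illustrative set of extensions to count as source files.
SOURCE_EXTENSIONS = {".py", ".js", ".ts", ".go", ".rs", ".java", ".c", ".cpp", ".h", ".md"}


def estimate_tokens(num_chars, chars_per_token=CHARS_PER_TOKEN):
    """Estimate a token count from a character count, rounding up."""
    return -(-num_chars // chars_per_token)


def count_source_chars(root, extensions=SOURCE_EXTENSIONS):
    """Sum the characters of all matching source files under `root`."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if os.path.splitext(name)[1].lower() not in extensions:
                continue
            try:
                with open(os.path.join(dirpath, name), encoding="utf-8", errors="ignore") as fh:
                    total += len(fh.read())
            except OSError:
                continue  # skip unreadable files rather than abort the scan
    return total


def fits_in_context(root, window=CONTEXT_WINDOW_TOKENS):
    """Return (estimated_tokens, fits) for the repository at `root`."""
    tokens = estimate_tokens(count_source_chars(root))
    return tokens, tokens <= window


if __name__ == "__main__":
    tokens, fits = fits_in_context(".")
    verdict = "fits within" if fits else "exceeds"
    print(f"Estimated {tokens:,} tokens; {verdict} a {CONTEXT_WINDOW_TOKENS:,}-token window")
```

Under this heuristic, a 1-million-token window corresponds to roughly 4 million characters of source text; actual capacity would depend on the real tokenizer and on how much of the window is reserved for the model's output.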

A "silent update" on February 11 gave users a taste of this future. The DeepSeek app was refreshed with a 1-million-token context window and a knowledge cutoff of May 2025. While this wasn't the full v4 release, it signaled that the underlying architecture for long-context reasoning is already live and undergoing final "grayscale" testing, a staged rollout to a subset of users.

Geopolitical Hardball and Hardware

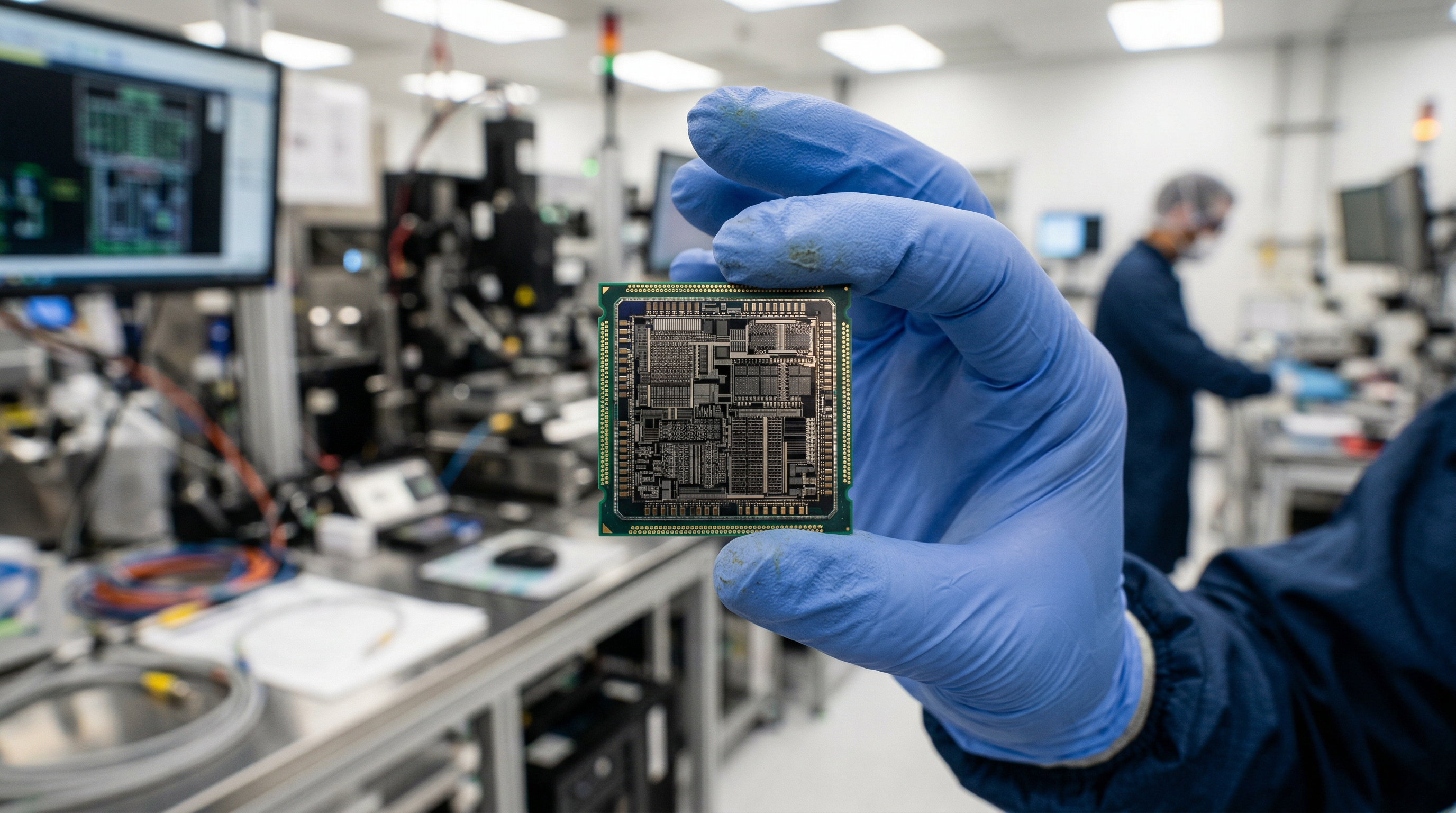

While developers wait for a download link, the geopolitical backdrop of the release is becoming increasingly tense. In a move that surprised many, DeepSeek reportedly declined to give U.S. chipmakers like Nvidia and AMD pre-release access to v4 for optimization. Instead, early access was granted to domestic suppliers like Huawei, a strategic decision likely intended to give Chinese hardware a "head start" in optimizing for frontier-level models.

This "Nvidia snub" aligns with a broader strategy of technological independence. By prioritizing domestic infrastructure, the lab is positioning v4 as more than just a software product: it is a symbol of national pride. That narrative is complicated, however, by reports that the model was trained on a cluster of Nvidia Blackwell chips in Inner Mongolia, which, if accurate, would suggest a significant bypass of existing U.S. export controls.

Despite the delays and the server latency issues that often plague the China-based service, the hype remains resilient. The current community consensus has shifted toward a launch on March 3, coinciding with the Lantern Festival. Whether or not this date holds, DeepSeek-v4 is already serving as a catalyst for a new era of AI, one in which cost and geopolitical alignment matter as much as raw performance.