AI

Powering the Frontier: Inside the $300 Billion Vera Rubin Expansion

NVIDIA and OpenAI commit to a massive 10-gigawatt infrastructure project to define the next decade of AI.

In the world of artificial intelligence, power is the new currency. A recent strategic expansion between NVIDIA and OpenAI has moved beyond simple chip orders into the realm of national-scale infrastructure. The two companies have now committed to a project that targets 10 gigawatts of power capacity, roughly the nameplate output of five Hoover Dams, to fuel the next generation of 'frontier' models.

The Architecture of the Vera Rubin Era

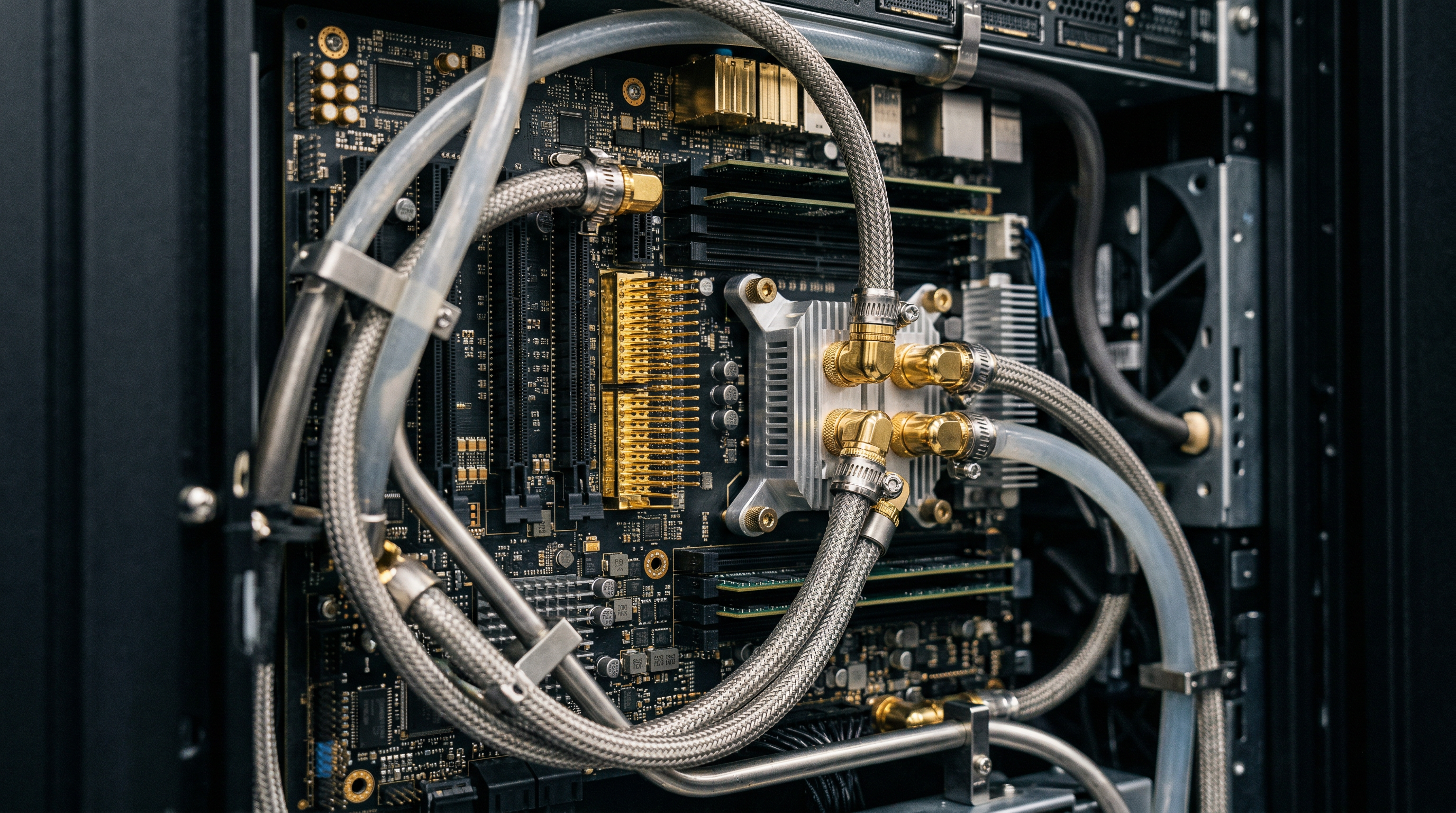

At the heart of this expansion is the Vera Rubin platform, named after the pioneering astronomer. This is not just a faster chip; it represents a shift from building individual processors to designing entire data centers as a single unit. The system is built around the Vera CPU, an 88-core Arm-based processor, and the Rubin GPU, which uses next-generation HBM4 memory to move data faster than earlier generations could.

For OpenAI, the performance gains are transformative. The Vera Rubin architecture provides up to five times the AI compute power of its predecessor, the Blackwell series. More importantly, it aims for a tenfold reduction in the cost of inference—the process of an AI model actually answering a user's prompt. By making intelligence cheaper and faster to deliver, the partnership seeks to make 'agentic reasoning,' or multi-step problem-solving, a standard feature of daily computing.

A single 'NVL72' rack in this setup provides eight exaflops of performance. To put that in perspective, one exaflop is a quintillion (10^18) floating-point operations per second. This density is necessary because trillion-parameter models require massive amounts of memory bandwidth, roughly 1.7 petabytes per second, to keep the processors from idling while they wait for data to move between components.
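To see why bandwidth matters as much as raw compute, the two rack-level figures above can be set against each other. The following is a back-of-the-envelope sketch, not a benchmark: the function names are illustrative, and the one-byte-per-parameter weight size is an assumption made here for simplicity, not a figure from NVIDIA or OpenAI.

```python
EXA = 10**18   # one exaflop = 10^18 operations per second
PETA = 10**15  # one petabyte = 10^15 bytes

rack_flops = 8 * EXA          # eight exaflops per rack (figure quoted above)
rack_bandwidth = 1.7 * PETA   # 1.7 petabytes per second (figure quoted above)


def flops_per_byte(flops, bandwidth):
    """Operations the rack can perform for every byte it moves."""
    return flops / bandwidth


def weight_sweep_seconds(params, bytes_per_param, bandwidth):
    """Time to read every model weight once at the given bandwidth."""
    return params * bytes_per_param / bandwidth


balance = flops_per_byte(rack_flops, rack_bandwidth)

# ASSUMPTION: a 1-trillion-parameter model stored at 1 byte per parameter.
sweep = weight_sweep_seconds(10**12, 1, rack_bandwidth)

print(f"{balance:.0f} operations per byte moved")
print(f"{sweep * 1e3:.2f} ms to read every weight once")
```

Under these assumptions the rack can perform roughly 4,700 operations for each byte it moves, so any workload that does less arithmetic per byte than that leaves compute units waiting on memory.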

Economic Ambition and Physical Reality

The scale of the investment is as staggering as the technology. NVIDIA is investing up to $100 billion in OpenAI, structured at $10 billion for every gigawatt of capacity deployed. When you add the costs of data center shells, cooling systems, and networking, the total price tag for the first five gigawatts is estimated to exceed $300 billion. This 'infrastructure-first' approach reflects Sam Altman’s belief that compute capacity will be the fundamental basis for the future economy.
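The figures in this paragraph can be checked with simple arithmetic. The sketch below uses only the numbers quoted above; the variable and function names are illustrative, and the $300 billion total is treated as a lower bound because the estimate is stated as "exceeding" that amount.

```python
BILLION = 10**9

investment_per_gw = 10 * BILLION    # NVIDIA's investment per gigawatt deployed
target_gw = 10                      # full project target in gigawatts
first_phase_gw = 5                  # gigawatts covered by the cost estimate
first_phase_total = 300 * BILLION   # lower bound on the first-phase build cost


def nvidia_investment(gigawatts_deployed):
    """Cumulative NVIDIA investment, capped at the 10-gigawatt target."""
    return min(gigawatts_deployed, target_gw) * investment_per_gw


# Implied all-in build cost per gigawatt (shells, cooling, networking, chips).
cost_per_gw = first_phase_total / first_phase_gw

# NVIDIA's investment at five gigawatts relative to the build cost.
# This is a size comparison only; the investment is not a direct payment
# toward construction.
investment_ratio = nvidia_investment(first_phase_gw) / first_phase_total

print(f"Full NVIDIA commitment: ${nvidia_investment(target_gw) / BILLION:.0f}B")
print(f"Implied build cost per gigawatt: ${cost_per_gw / BILLION:.0f}B")
print(f"Investment relative to first-phase cost: {investment_ratio:.0%}")
```

On these numbers, each gigawatt implies at least $60 billion in total build cost, about six times the $10 billion NVIDIA invests per gigawatt.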

However, this ambition faces significant real-world hurdles. While the funding is secured—OpenAI recently closed a $110 billion round with a massive contribution from NVIDIA—the physical power grid remains a bottleneck. In many regions, the wait time for a new grid connection of this size can range from two to ten years. This has led OpenAI to diversify its bets, including a $38 billion deal with Amazon to utilize AWS infrastructure alongside NVIDIA’s proprietary hardware.

Critics also point to the risks of 'circular financing.' By investing billions into its own largest customer, NVIDIA ensures its hardware remains the industry standard, but it also creates a closed loop that some analysts find concerning. Regardless of the financial optics, the move signals that the race for AI supremacy is no longer just about who has the best code, but who can secure the most electricity and the most advanced silicon to harness it.