Tech

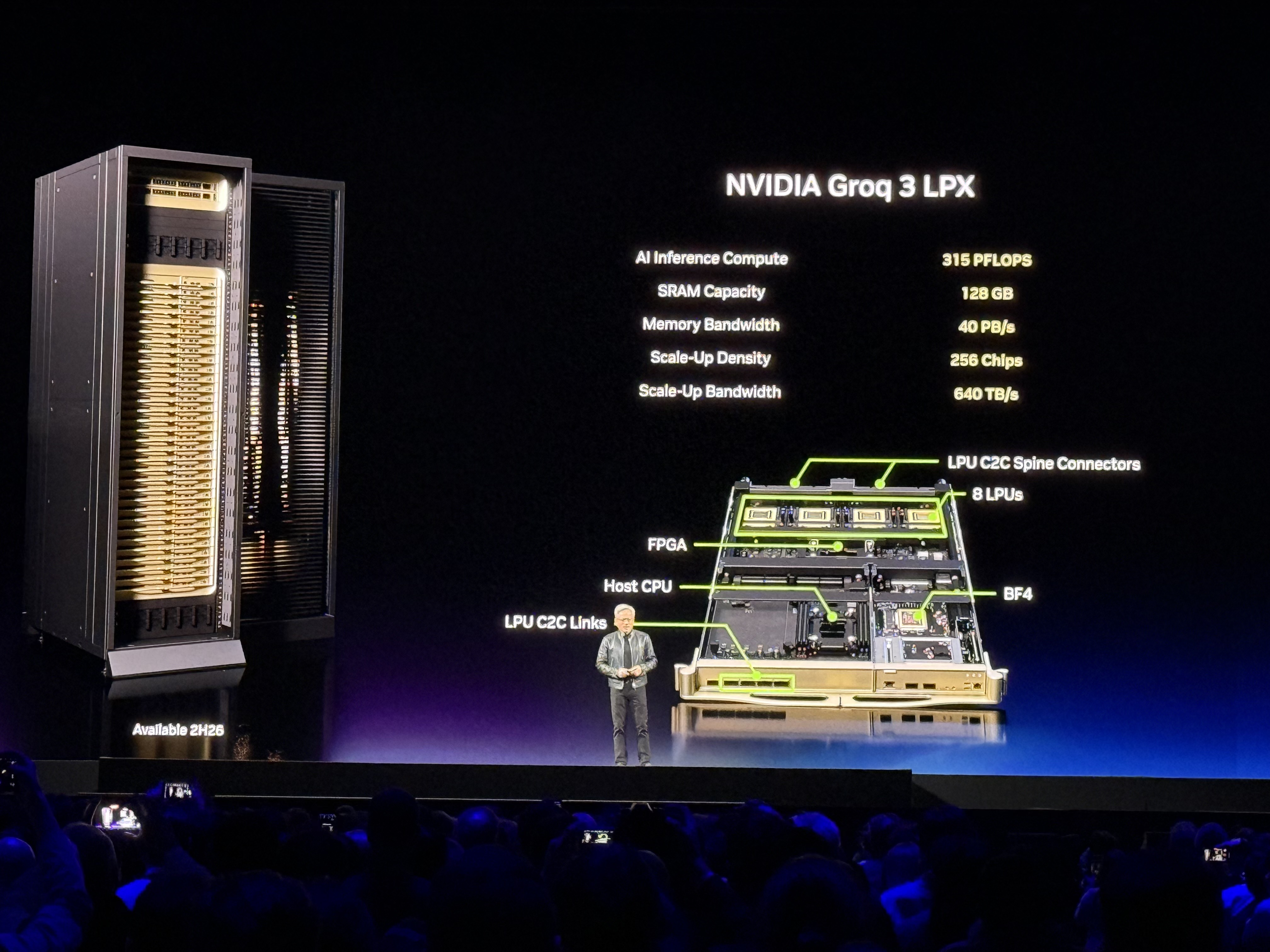

TechNVIDIA Integrates Groq LPUs to Turbocharge Vera Rubin AI Inference

By splitting prefill and decode tasks, the new Vera Rubin platform delivers 35x higher throughput, redefining what is possible for agentic AI.

The biggest obstacle to the next generation of AI isn't raw computing power—it’s waiting for the response. With the unveiling of the Vera Rubin platform, NVIDIA has effectively shattered that barrier by integrating Groq’s LPU technology, achieving a staggering 35x increase in inference throughput. This isn't just an incremental hardware update; it is a fundamental shift in how we build the brains of autonomous agents.

The Power of Division: Prefill vs. Decode

Historically, AI inference was treated as a monolithic task, forcing general-purpose GPUs to handle both the heavy lifting of processing input (prefill) and the sequential generation of tokens (decode). The Vera Rubin architecture changes the game by splitting these duties. The new Rubin GPU, packed with HBM4 memory and delivering 50 petaFLOPS, handles the memory-intensive prefill phase. Once that context is set, the baton is passed to the Groq 3 LPU.

The Groq LPU is a master of latency. By ditching external memory in favor of massive on-chip SRAM, it achieves 150 TB/s of bandwidth per chip, generating tokens at speeds that feel practically instantaneous. By segregating these tasks into a co-designed LPX rack, NVIDIA has managed to squeeze 35 times the inference throughput per megawatt out of the system compared to previous Blackwell setups. It is a classic engineering lesson: don't make one chip do everything; make the right chip do one thing perfectly.

Why Agentic AI Demands This Leap

We are currently shifting from simple chatbots to agentic AI—autonomous programs that execute multi-step workflows. These agents don't just output text; they interact with APIs, analyze files, and make real-time decisions. This requires a level of responsiveness that current hardware simply cannot provide without incurring massive energy costs or agonizing lag. NVIDIA’s $20 billion strategic move to license Groq’s tech acknowledges that the 'memory wall' is the final boss of AI scaling.

Looking ahead, this heterogeneous approach sets the stage for a massive leap in enterprise AI adoption. With hardware availability slated for late 2026, we should expect a surge in AI applications that were previously dismissed as too slow or expensive. For developers and businesses, the takeaway is clear: the era of waiting for tokens is ending, and the era of high-speed, autonomous digital agents is just beginning. We are moving toward a future where compute is no longer a constraint, but a commodity.

NVIDIA Groq Architecture Shift

Keep reading

Tech

TechAnthropic Launches Claude Certified Architect Program to Standardize AI Development

Anthropic's new certification is more than a badge; it is a declaration that building reliable, autonomous AI agents is now a core engineering discipline.

Tech

TechGoogle Transforms Stitch Into Programmable Infrastructure for AI Agents

Google is moving its experimental design tool, Stitch, out of the browser and into the code, signaling a shift toward agent-driven software development.

Tech

TechNvidia Develops Vera Rubin Space-1 Chips for Orbital Data Centers

Nvidia is pioneering orbital computing with the Vera Rubin Space-1, a bold move to solve the cooling crisis by moving data centers off-planet.